Using ChatPromptTemplate

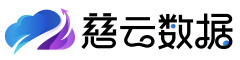

Usage 1:

from langchain_core.prompts import ChatPromptTemplate

# Build a one-message chat prompt with a single {topic} placeholder.
prompt = ChatPromptTemplate.from_template("tell me the weather of {topic}")

# format() renders the prompt as a plain string. The result is not named `str`,
# because that would shadow the built-in type.
text = prompt.format(topic="shenzhen")
print(text)

This prints:

Human: tell me the weather of shenzhen

Finally, use it together with an LLM:

import ChatGLM  # local wrapper module providing ChatGLM_LLM (not part of LangChain)

from langchain_core.output_parsers import StrOutputParser
from langchain_core.prompts import ChatPromptTemplate

# The placeholder name must match the key passed to invoke() exactly (case-sensitive).
prompt = ChatPromptTemplate.from_template("Who is {name}")

llm = ChatGLM.ChatGLM_LLM()
output_parser = StrOutputParser()

# Pipe the prompt into the model, then parse the model output into a string.
chain05 = prompt | llm | output_parser
print(chain05.invoke({"name": "Bill Gates"}))

Usage 2:

import ChatGLM  # local wrapper module providing ChatGLM_LLM (not part of LangChain)

from langchain_core.output_parsers import StrOutputParser
from langchain_core.prompts import ChatPromptTemplate

# Each (role, text) tuple becomes one message; {name} and {user_input} are placeholders.
prompt = ChatPromptTemplate.from_messages([
    ("system", "You are a helpful AI bot. Your name is {name}."),
    ("human", "Hello, how are you doing?"),
    ("ai", "I'm doing well, thanks!"),
    ("human", "{user_input}"),
])

llm = ChatGLM.ChatGLM_LLM()
output_parser = StrOutputParser()

chain05 = prompt | llm | output_parser
print(chain05.invoke({"name": "Bob", "user_input": "What is your name"}))

Alternatively:

import ChatGLM  # local wrapper module providing ChatGLM_LLM (not part of LangChain)

from langchain_core.prompts import ChatPromptTemplate

llm = ChatGLM.ChatGLM_LLM()

prompt = ChatPromptTemplate.from_messages([
    ("system", "You are a helpful AI bot. Your name is {name}."),
    ("human", "Hello, how are you doing?"),
    ("ai", "I'm doing well, thanks!"),
    ("human", "{user_input}"),
])

# format_prompt() takes keyword arguments (not a dict) and returns a prompt value.
a = prompt.format_prompt(name="Bob", user_input="What is your name")
print(a)

# The prompt value can be passed to the model directly, without building a chain.
print(llm.invoke(a))

Here is another example:

import gradio as gr
from langchain_core.output_parsers import StrOutputParser
from langchain_core.prompts import ChatPromptTemplate

from LLMs import myllm  # local module that returns the model object

llm = myllm()
parser = StrOutputParser()

# The template passes the user's question through unchanged.
template = """{question}"""
prompt = ChatPromptTemplate.from_template(template)
chain = prompt | llm | parser


def greet3(name):
    return chain.invoke({"question": name})


def alternatingly_agree(message, history):
    # history is supplied by Gradio but is not used here.
    return greet3(message)


gr.ChatInterface(alternatingly_agree).launch(server_name="0.0.0.0", share=False)

References:

https://python.langchain.com/docs/modules/model_io/prompts/quick_start

https://python.langchain.com/docs/modules/model_io/prompts/composition